1. Build a Gemini-powered Flutter app

What you'll build

In this codelab, you'll build Colorist - an interactive Flutter application that brings the power of the Gemini API directly into your Flutter app. Ever wanted to let users control your app through natural language but didn't know where to start? This codelab shows you how.

Colorist allows users to describe colors in natural language (like "the orange of a sunset" or "deep ocean blue"), and the app:

- Processes these descriptions using Google's Gemini API

- Interprets the descriptions into precise RGB color values

- Displays the color on screen in real-time

- Provides technical color details and interesting context about the color

- Maintains a history of recently generated colors

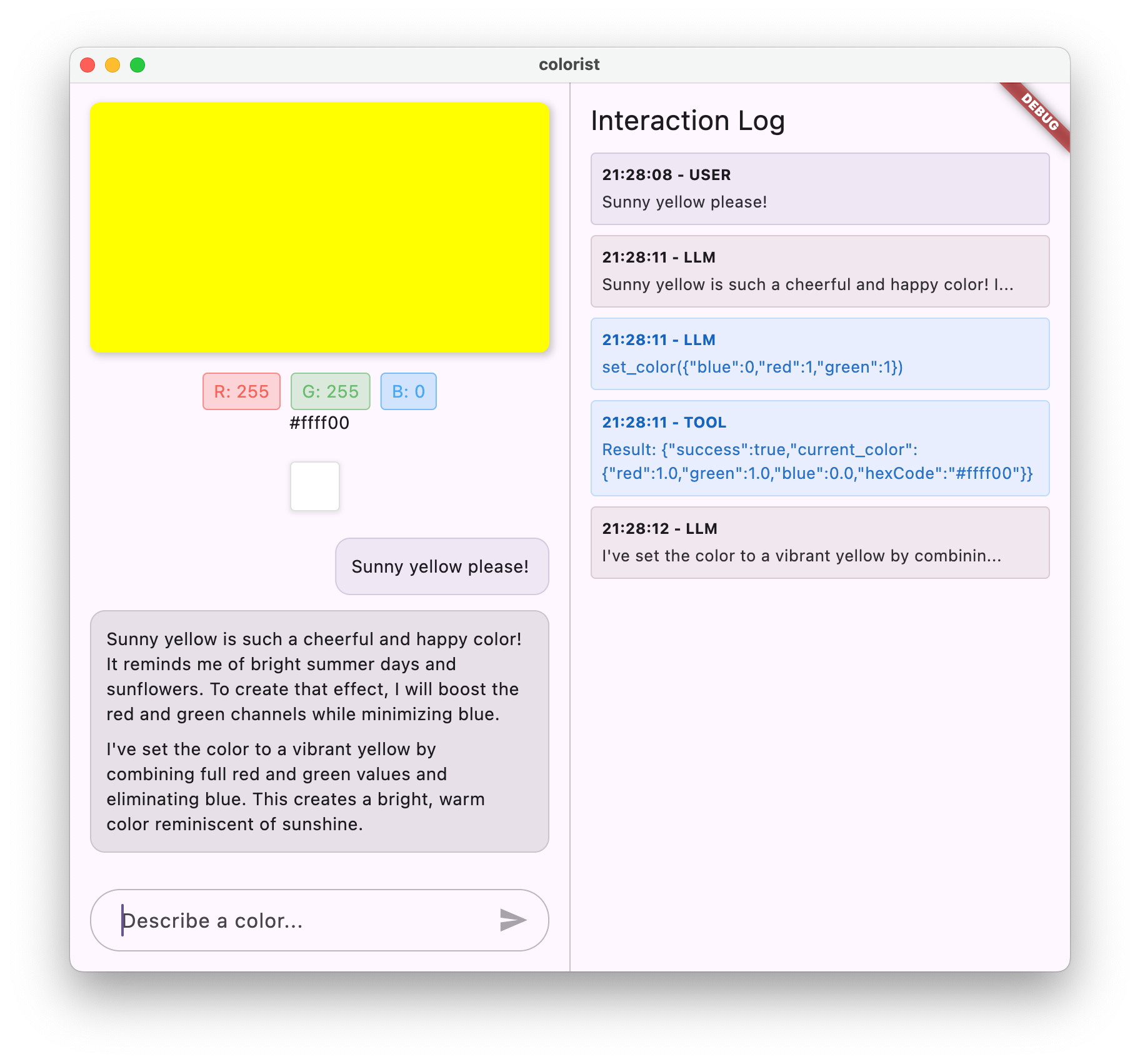

The app features a split-screen interface with a color display area and an interactive chat system on one side, and a detailed log panel showing the raw LLM interactions on the other side. This log enables you to better understand how an LLM integration really works under the hood.

Why this matters for Flutter developers

LLMs are revolutionizing how users interact with applications, but integrating them effectively into mobile and desktop apps presents unique challenges. This codelab teaches you practical patterns that go beyond just the raw API calls.

Your learning journey

This codelab takes you through the process of building Colorist step by step:

- Project setup - You'll start with a basic Flutter app structure and the colorist_ui package

- Basic Gemini integration - Connect your app to Vertex AI in Firebase and implement simple LLM communication

- Effective prompting - Create a system prompt that guides the LLM to understand color descriptions

- Function declarations - Define tools that the LLM can use to set colors in your application

- Tool handling - Process function calls from the LLM and connect them to your app's state

- Streaming responses - Enhance the user experience with real-time streaming LLM responses

- LLM Context Synchronization - Create a cohesive experience by informing the LLM of user actions

What you'll learn

- Configure Vertex AI in Firebase for Flutter applications

- Craft effective system prompts to guide LLM behavior

- Implement function declarations that bridge natural language and app features

- Process streaming responses for a responsive user experience

- Synchronize state between UI events and the LLM

- Manage LLM conversation state using Riverpod

- Handle errors gracefully in LLM-powered applications

Code preview: A taste of what you'll implement

Here's a glimpse of the function declaration you'll create to let the LLM set colors in your app:

FunctionDeclaration get setColorFuncDecl => FunctionDeclaration(

'set_color',

'Set the color of the display square based on red, green, and blue values.',

parameters: {

'red': Schema.number(description: 'Red component value (0.0 - 1.0)'),

'green': Schema.number(description: 'Green component value (0.0 - 1.0)'),

'blue': Schema.number(description: 'Blue component value (0.0 - 1.0)'),

},

);

Prerequisites

To get the most out of this codelab, you should have:

- Flutter development experience - Familiarity with Flutter basics and Dart syntax

- Asynchronous programming knowledge - Understanding of Futures, async/await, and streams

- Firebase account - You'll need a Google Account to set up Firebase

- Firebase project with billing enabled - Vertex AI in Firebase requires a billing account

Let's get started building your first LLM-powered Flutter app!

2. Project setup & echo service

In this first step, you'll set up the project structure and implement a simple echo service that will later be replaced with the Gemini API integration. This establishes the application architecture and ensures your UI is working correctly before adding the complexity of LLM calls.

What you'll learn in this step

- Setting up a Flutter project with the required dependencies

- Working with the colorist_ui package for UI components

- Implementing an echo message service and connecting it to the UI


Create a new Flutter project

Start by creating a new Flutter project with the following command:

flutter create -e colorist --platforms=android,ios,macos,web,windows

The -e flag indicates that you want an empty project without the default counter app. The app is designed to work across desktop, mobile, and web. However, flutterfire does not support Linux at this time.

Add dependencies

Navigate to your project directory and add the required dependencies:

cd colorist

flutter pub add colorist_ui flutter_riverpod riverpod_annotation

flutter pub add --dev build_runner riverpod_generator riverpod_lint json_serializable custom_lint

This will add the following key packages:

- colorist_ui: A custom package that provides the UI components for the Colorist app

- flutter_riverpod and riverpod_annotation: For state management

- logging: For structured logging

- Development dependencies for code generation and linting

Your pubspec.yaml will look similar to this:

pubspec.yaml

name: colorist

description: "A new Flutter project."

publish_to: 'none'

version: 0.1.0

environment:

  sdk: ^3.7.2

dependencies:

  flutter:

    sdk: flutter

  colorist_ui: ^0.1.0

  flutter_riverpod: ^2.6.1

  riverpod_annotation: ^2.6.1

dev_dependencies:

  flutter_test:

    sdk: flutter

  flutter_lints: ^5.0.0

  build_runner: ^2.4.15

  riverpod_generator: ^2.6.5

  riverpod_lint: ^2.6.5

  json_serializable: ^6.9.4

  custom_lint: ^0.7.5

flutter:

  uses-material-design: true

Configure analysis options

Add custom_lint to your analysis_options.yaml file at the root of your project:

include: package:flutter_lints/flutter.yaml

analyzer:

  plugins:

    - custom_lint

This configuration enables Riverpod-specific lints to help maintain code quality.

Implement the main.dart file

Replace the content of lib/main.dart with the following:

lib/main.dart

import 'package:colorist_ui/colorist_ui.dart';

import 'package:flutter/material.dart';

import 'package:flutter_riverpod/flutter_riverpod.dart';

void main() async {

runApp(ProviderScope(child: MainApp()));

}

class MainApp extends ConsumerWidget {

const MainApp({super.key});

@override

Widget build(BuildContext context, WidgetRef ref) {

return MaterialApp(

theme: ThemeData(

colorScheme: ColorScheme.fromSeed(seedColor: Colors.deepPurple),

),

home: MainScreen(

sendMessage: (message) {

sendMessage(message, ref);

},

),

);

}

// A fake LLM that just echoes back what it receives.

void sendMessage(String message, WidgetRef ref) {

final chatStateNotifier = ref.read(chatStateNotifierProvider.notifier);

final logStateNotifier = ref.read(logStateNotifierProvider.notifier);

chatStateNotifier.addUserMessage(message);

logStateNotifier.logUserText(message);

chatStateNotifier.addLlmMessage(message, MessageState.complete);

logStateNotifier.logLlmText(message);

}

}

This sets up a Flutter app that implements a simple echo service, which mimics the behavior of an LLM by returning the user's message unchanged.

Understanding the architecture

Let's take a minute to understand the architecture of the colorist app:

The colorist_ui package

The colorist_ui package provides pre-built UI components and state management tools:

- MainScreen: The main UI component that displays:

- A split-screen layout on desktop (interaction area and log panel)

- A tabbed interface on mobile

- Color display, chat interface, and history thumbnails

- State Management: The app uses several state notifiers:

- ChatStateNotifier: Manages the chat messages

- ColorStateNotifier: Manages the current color and history

- LogStateNotifier: Manages the log entries for debugging

- Message Handling: The app uses a message model with different states:

- User messages: Entered by the user

- LLM messages: Generated by the LLM (or your echo service for now)

- MessageState: Tracks whether LLM messages are complete or still streaming in
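To make the message model above more concrete, here is a minimal plain-Dart sketch of a message that can be appended to while it streams and then marked complete. The type and member names (ChatMessage, MessageRole, append, finalize) are assumptions made for illustration; the actual classes in colorist_ui may be shaped differently.

```dart
// Hypothetical sketch: these types are illustrative and are not the
// actual classes exported by the colorist_ui package.

/// Who authored a chat message.
enum MessageRole { user, llm }

/// Whether an LLM message is still receiving text or is finished.
enum MessageState { streaming, complete }

/// An immutable chat message; every update produces a new copy.
class ChatMessage {
  const ChatMessage({
    required this.id,
    required this.role,
    required this.text,
    required this.state,
  });

  final String id;
  final MessageRole role;
  final String text;
  final MessageState state;

  /// Returns a copy with [chunk] appended, as a streaming update would.
  ChatMessage append(String chunk) =>
      ChatMessage(id: id, role: role, text: text + chunk, state: state);

  /// Returns a copy marked as complete once the response has finished.
  ChatMessage finalize() => ChatMessage(
        id: id,
        role: role,
        text: text,
        state: MessageState.complete,
      );
}
```

Keeping the message immutable is one possible design choice: a state notifier can swap the old copy for the new one, which makes changes easy for the UI to detect.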

Application architecture

The app is organized into the following layers:

- UI Layer: Provided by the colorist_ui package

- State Management: Uses Riverpod for reactive state management

- Service Layer: Currently contains your simple echo service, which will be replaced with the Gemini chat service

- LLM Integration: Will be added in later steps

This separation allows you to focus on implementing the LLM integration while the UI components are already taken care of.
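One way to picture this separation is a small interface that hides where replies come from. The sketch below is plain Dart and uses hypothetical names (ChatBackend, EchoBackend, ChatController) that do not appear in the codelab's code. It only illustrates why an echo implementation can later be swapped for a real LLM client without touching the caller.

```dart
// Hypothetical sketch: ChatBackend, EchoBackend, and ChatController are
// illustrative names, not part of colorist_ui or the codelab's code.

/// Anything that can turn a user message into a reply.
abstract interface class ChatBackend {
  Future<String> reply(String message);
}

/// Stand-in backend that returns the user's message unchanged.
class EchoBackend implements ChatBackend {
  @override
  Future<String> reply(String message) async => message;
}

/// Holds the transcript and delegates reply generation to a backend.
class ChatController {
  ChatController(this.backend);

  final ChatBackend backend;
  final List<String> transcript = [];

  Future<void> send(String message) async {
    transcript.add('user: $message');
    transcript.add('llm: ${await backend.reply(message)}');
  }
}
```

Constructing ChatController(EchoBackend()) gives the echo behavior. A backend that calls a real model would implement the same reply method.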

Run the app

Run the app with the following command:

flutter run -d DEVICE

Replace DEVICE with your target device, such as macos, windows, chrome, or a device ID.

You should now see the Colorist app with:

- A color display area with a default color

- A chat interface where you can type messages

- A log panel showing the chat interactions

Try typing a message like "I'd like a deep blue color" and press Send. The echo service will simply repeat your message. In later steps, you'll replace this with actual color interpretation using the Gemini API via Vertex AI in Firebase.

What's next?

In the next step, you'll configure Firebase and implement basic Gemini API integration to replace your echo service with the Gemini chat service. This will allow the app to interpret color descriptions and provide intelligent responses.

Troubleshooting

UI package issues

If you encounter issues with the colorist_ui package:

- Make sure you're using the latest version

- Verify that you've added the dependency correctly

- Check for any conflicting package versions

Build errors

If you see build errors:

- Make sure you have the latest stable channel Flutter SDK installed

- Run flutter clean followed by flutter pub get

- Check the console output for specific error messages

Key concepts learned

- Setting up a Flutter project with the necessary dependencies

- Understanding the application's architecture and component responsibilities

- Implementing a simple service that mimics the behavior of an LLM

- Connecting the service to the UI components

- Using Riverpod for state management

3. Basic Gemini chat integration

In this step, you'll replace the echo service from the previous step with Gemini API integration using Vertex AI in Firebase. You'll configure Firebase, set up the necessary providers, and implement a basic chat service that communicates with the Gemini API.

What you'll learn in this step

- Setting up Firebase in a Flutter application

- Configuring Vertex AI in Firebase for Gemini access

- Creating Riverpod providers for Firebase and Gemini services

- Implementing a basic chat service with the Gemini API

- Handling asynchronous API responses and error states

Set up Firebase

First, you need to set up Firebase for your Flutter project. This involves creating a Firebase project, adding your app to it, and configuring the necessary Vertex AI settings.

Create a Firebase project

- Go to the Firebase Console and sign in with your Google Account.

- Click Create a Firebase project or select an existing project.

- Follow the setup wizard to create your project.

- Once your project is created, you'll need to upgrade to the Blaze plan (pay-as-you-go) to access Vertex AI services. Click the Upgrade button in the lower left of the Firebase console.

Set up Vertex AI in your Firebase project

- In the Firebase console, navigate to your project.

- In the left sidebar, select AI.

- In the Vertex AI in Firebase card, select Get Started.

- Follow the prompts to enable the Vertex AI in Firebase APIs for your project.

Install the FlutterFire CLI

The FlutterFire CLI simplifies Firebase setup in Flutter apps:

dart pub global activate flutterfire_cli

Add Firebase to your Flutter app

- Add the Firebase core and Vertex AI packages to your project:

flutter pub add firebase_core firebase_vertexai

- Run the FlutterFire configuration command:

flutterfire configure

This command will:

- Ask you to select the Firebase project you just created

- Register your Flutter app(s) with Firebase

- Generate a firebase_options.dart file with your project configuration

The command will automatically detect your selected platforms (iOS, Android, macOS, Windows, web) and configure them appropriately.

Platform-specific configuration

Firebase requires higher minimum platform versions than Flutter's defaults. It also requires network access to talk to the Vertex AI in Firebase servers.

Configure macOS permissions

For macOS, you need to enable network access in your app's entitlements:

- Open macos/Runner/DebugProfile.entitlements and add:

macos/Runner/DebugProfile.entitlements

<key>com.apple.security.network.client</key>

<true/>

- Also open macos/Runner/Release.entitlements and add the same entry.

- Update the minimum macOS version at the top of macos/Podfile:

macos/Podfile

# Firebase requires at least macOS 10.15

platform :osx, '10.15'

Configure iOS permissions

For iOS, update the minimum version at the top of ios/Podfile:

ios/Podfile

# Firebase requires at least iOS 13.0

platform :ios, '13.0'

Configure Android settings

For Android, update android/app/build.gradle.kts:

android/app/build.gradle.kts

android {

// ...

ndkVersion = "27.0.12077973"

defaultConfig {

// ...

minSdk = 23

// ...

}

}

Create Gemini model providers

Now you'll create the Riverpod providers for Firebase and Gemini. Create a new file lib/providers/gemini.dart:

lib/providers/gemini.dart

import 'dart:async';

import 'package:firebase_core/firebase_core.dart';

import 'package:firebase_vertexai/firebase_vertexai.dart';

import 'package:flutter_riverpod/flutter_riverpod.dart';

import 'package:riverpod_annotation/riverpod_annotation.dart';

import '../firebase_options.dart';

part 'gemini.g.dart';

@riverpod

Future<FirebaseApp> firebaseApp(Ref ref) =>

Firebase.initializeApp(options: DefaultFirebaseOptions.currentPlatform);

@riverpod

Future<GenerativeModel> geminiModel(Ref ref) async {

await ref.watch(firebaseAppProvider.future);

final model = FirebaseVertexAI.instance.generativeModel(

model: 'gemini-2.0-flash',

);

return model;

}

@Riverpod(keepAlive: true)

Future<ChatSession> chatSession(Ref ref) async {

final model = await ref.watch(geminiModelProvider.future);

return model.startChat();

}

This file defines the functions behind three key providers. The Riverpod code generators produce the providers themselves when you run dart run build_runner.

- firebaseAppProvider: Initializes Firebase with your project configuration

- geminiModelProvider: Creates a Gemini generative model instance

- chatSessionProvider: Creates and maintains a chat session with the Gemini model

The keepAlive: true annotation on the chat session ensures it persists throughout the app's lifecycle, maintaining conversation context.
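As a rough mental model for this provider chain, the following plain-Dart analogy shows three lazily created, cached async values, each awaiting the one before it. It is not how Riverpod is implemented, and Lazy, initCount, and the string "resources" are hypothetical. Riverpod also handles disposal, invalidation, and rebuilds, which this sketch ignores.

```dart
// Plain-Dart analogy only: this is not how Riverpod is implemented.
// Lazy<T> and the string "resources" below are hypothetical.

/// Runs an async factory at most once and caches the resulting Future.
class Lazy<T> {
  Lazy(this._create);

  final Future<T> Function() _create;
  Future<T>? _cached;

  /// The first access starts the work; later accesses reuse the same Future.
  Future<T> get value => _cached ??= _create();
}

/// Counts how often the innermost dependency is initialized.
int initCount = 0;

final app = Lazy<String>(() async {
  initCount++;
  return 'firebase-app';
});

final model = Lazy<String>(() async => 'model on ${await app.value}');

final session = Lazy<String>(() async => 'session with ${await model.value}');
```

Awaiting session.value repeatedly returns the same cached result and initializes app only once. That is roughly the guarantee you want from a kept-alive chat session: one session, reused for the whole conversation.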

Implement the Gemini chat service

Create a new file lib/services/gemini_chat_service.dart to implement the chat service:

lib/services/gemini_chat_service.dart

import 'dart:async';

import 'package:colorist_ui/colorist_ui.dart';

import 'package:firebase_vertexai/firebase_vertexai.dart';

import 'package:flutter_riverpod/flutter_riverpod.dart';

import 'package:riverpod_annotation/riverpod_annotation.dart';

import '../providers/gemini.dart';

part 'gemini_chat_service.g.dart';

class GeminiChatService {

GeminiChatService(this.ref);

final Ref ref;

Future<void> sendMessage(String message) async {

final chatSession = await ref.read(chatSessionProvider.future);

final chatStateNotifier = ref.read(chatStateNotifierProvider.notifier);

final logStateNotifier = ref.read(logStateNotifierProvider.notifier);

chatStateNotifier.addUserMessage(message);

logStateNotifier.logUserText(message);

final llmMessage = chatStateNotifier.createLlmMessage();

try {

final response = await chatSession.sendMessage(Content.text(message));

final responseText = response.text;

if (responseText != null) {

logStateNotifier.logLlmText(responseText);

chatStateNotifier.appendToMessage(llmMessage.id, responseText);

}

} catch (e, st) {

logStateNotifier.logError(e, st: st);

chatStateNotifier.appendToMessage(

llmMessage.id,

"\nI'm sorry, I encountered an error processing your request. "

"Please try again.",

);

} finally {

chatStateNotifier.finalizeMessage(llmMessage.id);

}

}

}

@riverpod

GeminiChatService geminiChatService(Ref ref) => GeminiChatService(ref);

This service:

- Accepts user messages and sends them to the Gemini API

- Updates the chat interface with responses from the model

- Logs all communication so you can follow the real LLM flow

- Handles errors with appropriate user feedback

Note: The Log window will look almost identical to the chat window at this point. The log will become more interesting once you introduce function calls and then streaming responses.
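The error-handling shape used by the service (try, catch, finally) can be isolated from Firebase and studied on its own. The sketch below is plain Dart with hypothetical names (Reply, sendSafely). It assumes the model call can be represented as a function returning a nullable string. It is an illustration of the pattern, not the codelab's implementation.

```dart
// Hypothetical sketch: Reply and sendSafely are illustrative names.

/// A reply under construction, mirroring a message that is appended to
/// and then finalized.
class Reply {
  final StringBuffer text = StringBuffer();
  bool isComplete = false;
}

/// Calls [ask] and always returns a completed reply, even on failure.
Future<Reply> sendSafely(
  Future<String?> Function() ask, {
  void Function(Object error, StackTrace stack)? onError,
}) async {
  final reply = Reply();
  try {
    final answer = await ask();
    if (answer != null) reply.text.write(answer);
  } catch (e, st) {
    // Report the failure, then show a friendly message instead of the error.
    onError?.call(e, st);
    reply.text.write("I'm sorry, I encountered an error. Please try again.");
  } finally {
    // Runs on both paths so a message is never left stuck in progress.
    reply.isComplete = true;
  }
  return reply;
}
```

The finally block is the important part: whether the call succeeds or throws, the message is finalized exactly once.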

Generate Riverpod code

Run the build runner command to generate the necessary Riverpod code:

dart run build_runner build --delete-conflicting-outputs

This will create the .g.dart files that Riverpod needs to function.

Update the main.dart file

Update your lib/main.dart file to use the new Gemini chat service:

lib/main.dart

import 'package:colorist_ui/colorist_ui.dart';

import 'package:flutter/material.dart';

import 'package:flutter_riverpod/flutter_riverpod.dart';

import 'providers/gemini.dart';

import 'services/gemini_chat_service.dart';

void main() async {

runApp(ProviderScope(child: MainApp()));

}

class MainApp extends ConsumerWidget {

const MainApp({super.key});

@override

Widget build(BuildContext context, WidgetRef ref) {

final model = ref.watch(geminiModelProvider);

return MaterialApp(

theme: ThemeData(

colorScheme: ColorScheme.fromSeed(seedColor: Colors.deepPurple),

),

home: model.when(

data:

(data) => MainScreen(

sendMessage: (text) {

ref.read(geminiChatServiceProvider).sendMessage(text);

},

),

loading: () => LoadingScreen(message: 'Initializing Gemini Model'),

error: (err, st) => ErrorScreen(error: err),

),

);

}

}

The key changes in this update are:

- Replacing the echo service with the Gemini API based chat service

- Adding loading and error screens using Riverpod's AsyncValue pattern with the when method

- Connecting the UI to your new chat service through the sendMessage callback
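If the when pattern is new to you, the following simplified stand-in may help. It is written in plain Dart with sealed classes and hypothetical names (LoadState, Loading, Loaded, Failed, describe). Riverpod's real AsyncValue has a richer API, so treat this only as a sketch of the idea that every state must be handled.

```dart
// Simplified stand-in for illustration; Riverpod's real AsyncValue has a
// richer API. LoadState and its subclasses are hypothetical names.

sealed class LoadState<T> {
  const LoadState();

  /// Maps each possible state to a value, forcing all cases to be handled.
  R when<R>({
    required R Function(T data) data,
    required R Function() loading,
    required R Function(Object error) error,
  }) =>
      switch (this) {
        Loaded<T>(:final value) => data(value),
        Loading<T>() => loading(),
        Failed<T>(error: final e) => error(e),
      };
}

class Loading<T> extends LoadState<T> {
  const Loading();
}

class Loaded<T> extends LoadState<T> {
  const Loaded(this.value);
  final T value;
}

class Failed<T> extends LoadState<T> {
  const Failed(this.error);
  final Object error;
}

/// Picks the text a screen would show for each state.
String describe(LoadState<String> state) => state.when(
      data: (model) => 'Main screen using $model',
      loading: () => 'Initializing Gemini Model',
      error: (err) => 'Error: $err',
    );
```

Because the class is sealed, the compiler checks that the switch covers every subclass, so a forgotten loading or error case is caught at compile time.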

Run the app

Run the app with the following command:

flutter run -d DEVICE

Replace DEVICE with your target device, such as macos, windows, chrome, or a device ID.

Now when you type a message, it will be sent to the Gemini API, and you'll receive a response from the LLM rather than an echo. The log panel will show the interactions with the API.

Understanding LLM communication

Let's take a moment to understand what's happening when you communicate with the Gemini API:

The communication flow

- User input: The user enters text in the chat interface

- Request Formatting: The app formats the text as a Content object for the Gemini API

- API Communication: The text is sent to the Gemini API via Vertex AI in Firebase

- LLM Processing: The Gemini model processes the text and generates a response

- Response Handling: The app receives the response and updates the UI

- Logging: All communication is logged for transparency

Chat sessions and conversation context

The Gemini chat session maintains context between messages, allowing for conversational interactions. This means the LLM "remembers" previous exchanges in the current session, enabling more coherent conversations.

The keepAlive: true annotation on your chat session provider ensures this context persists throughout the app's lifecycle. This persistent context is crucial for maintaining a natural conversation flow with the LLM.
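One common way for a chat session to "remember" earlier turns is to keep the history on the client and resend it with every request. The plain-Dart sketch below assumes that approach and uses hypothetical names (Turn, Generate, SimpleChatSession). It does not mirror the firebase_vertexai API and is meant only to show why losing the session object means losing the context.

```dart
// Hypothetical sketch: Turn, Generate, and SimpleChatSession are
// illustrative and do not mirror the firebase_vertexai API.

/// One entry in the conversation: who spoke and what they said.
class Turn {
  const Turn(this.role, this.text);
  final String role; // 'user' or 'model'
  final String text;
}

/// A stateless model call: it sees only the turns passed to it.
typedef Generate = Future<String> Function(List<Turn> history);

/// Keeps the running history and resends it on every request.
class SimpleChatSession {
  SimpleChatSession(this._generate);

  final Generate _generate;
  final List<Turn> history = [];

  Future<String> sendMessage(String text) async {
    history.add(Turn('user', text));
    final reply = await _generate(List.unmodifiable(history));
    history.add(Turn('model', reply));
    return reply;
  }
}
```

If a new SimpleChatSession were created for each message, the history list would start empty every time and the model would see no earlier turns.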

What's next?

At this point, you can ask the Gemini API anything, as there are no restrictions in place on what it will respond to. For instance, you could ask it for a summary of the Wars of the Roses, which isn't related to your color app's purpose.

In the next step, you'll create a system prompt to guide Gemini in interpreting color descriptions more effectively. This will demonstrate how to customize an LLM's behavior for application-specific needs and focus its capabilities on your app's domain.

Troubleshooting

Firebase configuration issues

If you encounter errors with Firebase initialization:

- Ensure your firebase_options.dart file was correctly generated

- Verify that you've upgraded to the Blaze plan for Vertex AI access

API access errors

If you receive errors accessing the Gemini API:

- Confirm that billing is properly set up on your Firebase project

- Check that Vertex AI and the Cloud AI API are enabled in your Firebase project

- Check your network connectivity and firewall settings

- Verify that the model name (gemini-2.0-flash) is correct and available

Conversation context issues

If you notice that Gemini doesn't remember previous context from the chat:

- Confirm that the chatSession function is annotated with @Riverpod(keepAlive: true)

- Check that you're reusing the same chat session for all message exchanges

- Verify that the chat session is properly initialized before sending messages

Platform-specific issues

For platform-specific issues:

- iOS/macOS: Ensure the proper entitlements are set and minimum versions are configured

- Android: Verify the minimum SDK version is set correctly

- Check platform-specific error messages in the console

Key concepts learned

- Setting up Firebase in a Flutter application

- Configuring Vertex AI in Firebase for access to Gemini

- Creating Riverpod providers for asynchronous services

- Implementing a chat service that communicates with an LLM

- Handling asynchronous API states (loading, error, data)

- Understanding LLM communication flow and chat sessions

4. Effective prompting for color descriptions

In this step, you'll create and implement a system prompt that guides Gemini in interpreting color descriptions. System prompts are a powerful way to customize LLM behavior for specific tasks without changing your code.

What you'll learn in this step

- Understanding system prompts and their importance in LLM applications

- Crafting effective prompts for domain-specific tasks

- Loading and using system prompts in a Flutter app

- Guiding an LLM to provide consistently formatted responses

- Testing how system prompts affect LLM behavior

Understanding system prompts

Before diving into implementation, let's understand what system prompts are and why they're important:

What are system prompts?

A system prompt is a special type of instruction given to an LLM that sets the context, behavior guidelines, and expectations for its responses. Unlike user messages, system prompts:

- Establish the LLM's role and persona

- Define specialized knowledge or capabilities

- Provide formatting instructions

- Set constraints on responses

- Describe how to handle various scenarios

Think of a system prompt as giving the LLM its "job description" - it tells the model how to behave throughout the conversation.

Why system prompts matter

System prompts are critical for creating consistent, useful LLM interactions because they:

- Ensure consistency: Guide the model to provide responses in a consistent format

- Improve relevance: Focus the model on your specific domain (in your case, colors)

- Establish boundaries: Define what the model should and shouldn't do

- Enhance user experience: Create a more natural, helpful interaction pattern

- Reduce post-processing: Get responses in formats that are easier to parse or display

For your Colorist app, you need the LLM to consistently interpret color descriptions and provide RGB values in a specific format.

Create a system prompt asset

First, you'll create a system prompt file that will be loaded at runtime. This approach keeps the prompt separate from your Dart code, so you can edit it without changing any source files.

Create a new file assets/system_prompt.md with the following content:

assets/system_prompt.md

# Colorist System Prompt

You are a color expert assistant integrated into a desktop app called Colorist. Your job is to interpret natural language color descriptions and provide the appropriate RGB values that best represent that description.

## Your Capabilities

You are knowledgeable about colors, color theory, and how to translate natural language descriptions into specific RGB values. When users describe a color, you should:

1. Analyze their description to understand the color they are trying to convey

2. Determine the appropriate RGB values (values should be between 0.0 and 1.0)

3. Respond with a conversational explanation and explicitly state the RGB values

## How to Respond to User Inputs

When users describe a color:

1. First, acknowledge their color description with a brief, friendly response

2. Interpret what RGB values would best represent that color description

3. Always include the RGB values clearly in your response, formatted as: `RGB: (red=X.X, green=X.X, blue=X.X)`

4. Provide a brief explanation of your interpretation

Example:

User: "I want a sunset orange"

You: "Sunset orange is a warm, vibrant color that captures the golden-red hues of the setting sun. It combines a strong red component with moderate orange tones.

RGB: (red=1.0, green=0.5, blue=0.25)

I've selected values with high red, moderate green, and low blue to capture that beautiful sunset glow. This creates a warm orange with a slightly reddish tint, reminiscent of the sun low on the horizon."

## When Descriptions are Unclear

If a color description is ambiguous or unclear, please ask the user clarifying questions, one at a time.

## Important Guidelines

- Always keep RGB values between 0.0 and 1.0

- Always format RGB values as: `RGB: (red=X.X, green=X.X, blue=X.X)` for easy parsing

- Provide thoughtful, knowledgeable responses about colors

- When possible, include color psychology, associations, or interesting facts about colors

- Be conversational and engaging in your responses

- Focus on being helpful and accurate with your color interpretations

Understanding the system prompt structure

Let's break down what this prompt does:

- Definition of role: Establishes the LLM as a "color expert assistant"

- Task explanation: Defines the primary task as interpreting color descriptions into RGB values

- Response format: Specifies exactly how RGB values should be formatted for consistency

- Example exchange: Provides a concrete example of the expected interaction pattern

- Edge case handling: Instructs how to handle unclear descriptions

- Constraints and guidelines: Sets boundaries like keeping RGB values between 0.0 and 1.0

This structured approach ensures the LLM's responses will be consistent, informative, and formatted in a way that would be easy to parse if you wanted to extract the RGB values programmatically.
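To illustrate what "easy to parse" could mean in practice, here is a minimal plain-Dart sketch that extracts the values from the format requested in the prompt. The parseRgb helper and the Rgb record type are hypothetical and are not part of the codelab, which moves to function calls in a later step instead of parsing text.

```dart
// Hypothetical helper: parseRgb and Rgb are not part of the codelab's code.

/// A color with components in the 0.0 - 1.0 range.
typedef Rgb = ({double red, double green, double blue});

/// Matches text such as: RGB: (red=1.0, green=0.5, blue=0.25)
final _rgbPattern = RegExp(
  r'RGB:\s*\(red=([0-9]*\.?[0-9]+),\s*'
  r'green=([0-9]*\.?[0-9]+),\s*'
  r'blue=([0-9]*\.?[0-9]+)\)',
);

/// Extracts the first RGB group found in [text].
/// Returns null if nothing matches or a component is outside 0.0 - 1.0.
Rgb? parseRgb(String text) {
  final match = _rgbPattern.firstMatch(text);
  if (match == null) return null;

  final values = [
    for (var i = 1; i <= 3; i++) double.parse(match.group(i)!),
  ];
  if (values.any((v) => v < 0.0 || v > 1.0)) return null;

  return (red: values[0], green: values[1], blue: values[2]);
}
```

Returning null for out-of-range values is a deliberate choice here: even with a clear system prompt, model output should be validated before it drives the UI.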

Update pubspec.yaml

Now, update the bottom of your pubspec.yaml to include the assets directory:

pubspec.yaml

flutter:

  uses-material-design: true

  assets:

    - assets/

Run flutter pub get to refresh the asset bundle.

Create a system prompt provider

Create a new file lib/providers/system_prompt.dart to load the system prompt:

lib/providers/system_prompt.dart

import 'package:flutter/services.dart';

import 'package:flutter_riverpod/flutter_riverpod.dart';

import 'package:riverpod_annotation/riverpod_annotation.dart';

part 'system_prompt.g.dart';

@riverpod

Future<String> systemPrompt(Ref ref) =>

rootBundle.loadString('assets/system_prompt.md');

This provider uses Flutter's asset loading system to read the prompt file at runtime.

Update the Gemini model provider

Now modify your lib/providers/gemini.dart file to include the system prompt:

lib/providers/gemini.dart

import 'dart:async';

import 'package:firebase_core/firebase_core.dart';

import 'package:firebase_vertexai/firebase_vertexai.dart';

import 'package:flutter_riverpod/flutter_riverpod.dart';

import 'package:riverpod_annotation/riverpod_annotation.dart';

import '../firebase_options.dart';

import 'system_prompt.dart'; // Add this import

part 'gemini.g.dart';

@riverpod

Future<FirebaseApp> firebaseApp(Ref ref) =>

Firebase.initializeApp(options: DefaultFirebaseOptions.currentPlatform);

@riverpod

Future<GenerativeModel> geminiModel(Ref ref) async {

await ref.watch(firebaseAppProvider.future);

final systemPrompt = await ref.watch(systemPromptProvider.future); // Add this line

final model = FirebaseVertexAI.instance.generativeModel(

model: 'gemini-2.0-flash',

systemInstruction: Content.system(systemPrompt), // And this line

);

return model;

}

@Riverpod(keepAlive: true)

Future<ChatSession> chatSession(Ref ref) async {

final model = await ref.watch(geminiModelProvider.future);

return model.startChat();

}

The key change is adding systemInstruction: Content.system(systemPrompt) when creating the generative model. This tells Gemini to use your instructions as the system prompt for all interactions in this chat session.

Generate Riverpod code

Run the build runner command to generate the needed Riverpod code:

dart run build_runner build --delete-conflicting-outputs

Run and test the application

Now run your application:

flutter run -d DEVICE

Try testing it with various color descriptions:

- "I'd like a sky blue"

- "Give me a forest green"

- "Make a vibrant sunset orange"

- "I want the color of fresh lavender"

- "Show me something like a deep ocean blue"

You should notice that Gemini now responds with conversational explanations about the colors along with consistently formatted RGB values. The system prompt has effectively guided the LLM to provide the type of responses you need.

Also try asking it for content outside the context of colors, such as the leading causes of the Wars of the Roses. You should notice a difference from the previous step.

The importance of prompt engineering for specialized tasks

System prompts are both art and science. They're a critical part of LLM integration that can dramatically affect how useful the model is for your specific application. What you've done here is a form of prompt engineering - tailoring instructions to get the model to behave in ways that suit your application's needs.

Effective prompt engineering involves:

- Clear role definition: Establishing what the LLM's purpose is

- Explicit instructions: Detailing exactly how the LLM should respond

- Concrete examples: Showing rather than just telling what good responses look like

- Edge case handling: Instructing the LLM on how to deal with ambiguous scenarios

- Formatting specifications: Ensuring responses are structured in a consistent, usable way

The system prompt you've created transforms the generic capabilities of Gemini into a specialized color interpretation assistant that provides responses formatted specifically for your application's needs. This is a powerful pattern you can apply to many different domains and tasks.

What's next?

In the next step, you'll build on this foundation by adding function declarations, which allow the LLM to not just suggest RGB values, but actually call functions in your app to set the color directly. This demonstrates how LLMs can bridge the gap between natural language and concrete application features.

Troubleshooting

Asset loading issues

If you encounter errors loading the system prompt:

- Verify that your pubspec.yaml correctly lists the assets directory

- Check that the path in rootBundle.loadString() matches your file location

- Run flutter clean followed by flutter pub get to refresh the asset bundle

Inconsistent responses

If the LLM isn't consistently following your format instructions:

- Try making format requirements more explicit in the system prompt

- Add more examples to demonstrate the expected pattern

- Ensure the format you're requesting is reasonable for the model

API rate limiting

If you encounter errors related to rate limiting:

- Be aware that the Vertex AI service has usage limits

- Consider implementing retry logic with exponential backoff (left as an exercise for the reader)

- Check your Firebase console for any quota issues
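The retry logic mentioned above could be sketched as follows. This is a minimal, hedged example in plain Dart, not part of the codelab's code: the names backoffDelay and retryWithBackoff, the default delays, and the shouldRetry callback are all assumptions made for illustration. Which exception actually signals rate limiting depends on the SDK, so that decision is left to the caller.

```dart
import 'dart:math';

/// Delay before retry number [attempt] (0-based): base * 2^attempt,
/// capped at [maxDelay]. The defaults are illustrative assumptions.
Duration backoffDelay(
  int attempt, {
  Duration base = const Duration(milliseconds: 500),
  Duration maxDelay = const Duration(seconds: 8),
}) {
  // Cap the exponent so the multiplication cannot overflow.
  final factor = pow(2, min(attempt, 20)).toInt();
  final millis = base.inMilliseconds * factor;
  return Duration(milliseconds: min(millis, maxDelay.inMilliseconds));
}

/// Runs [action], making at most [maxAttempts] attempts in total.
/// [shouldRetry] (hypothetical hook) decides whether an error is worth
/// retrying; when omitted, every error is retried.
Future<T> retryWithBackoff<T>(
  Future<T> Function() action, {
  int maxAttempts = 4,
  Duration base = const Duration(milliseconds: 500),
  bool Function(Object error)? shouldRetry,
}) async {
  var attempt = 0;
  while (true) {
    try {
      return await action();
    } catch (error) {
      attempt++;
      final retryable = shouldRetry?.call(error) ?? true;
      // Give up when out of attempts or when the error is not retryable.
      if (attempt >= maxAttempts || !retryable) rethrow;
      await Future<void>.delayed(backoffDelay(attempt - 1, base: base));
    }
  }
}
```

In the app, you might wrap the call that sends a message to the chat session in retryWithBackoff. A production version would usually also add random jitter to each delay so that many clients don't retry at the same instant.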

Key concepts learned

- Understanding the role and importance of system prompts in LLM applications

- Crafting effective prompts with clear instructions, examples, and constraints

- Loading and using system prompts in a Flutter application

- Guiding LLM behavior for domain-specific tasks

- Using prompt engineering to shape LLM responses

This step demonstrates how you can achieve significant customization of LLM behavior without changing your code - simply by providing clear instructions in the system prompt.

5. Function declarations for LLM tools

In this step, you'll start the work of enabling Gemini to take action in your app by implementing function declarations. This powerful feature allows the LLM to not just suggest RGB values but actually set them in your app's UI through specialized tool calls. However, you won't see the LLM's requests executed in the Flutter app until you implement the handlers in the next step.

What you'll learn in this step

- Understanding LLM function calling and its benefits for Flutter applications

- Defining schema-based function declarations for Gemini

- Integrating function declarations with your Gemini model

- Updating the system prompt to utilize tool capabilities

Understanding function calling

Before implementing function declarations, let's understand what they are and why they're valuable:

What is function calling?

Function calling (sometimes called "tool use") is a capability that allows an LLM to:

- Recognize when a user request would benefit from invoking a specific function

- Generate a structured JSON object with the parameters needed for that function

- Let your application execute the function with those parameters

- Receive the function's result and incorporate it into its response

Rather than the LLM just describing what to do, function calling empowers the LLM to trigger concrete actions in your application.
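To make the idea of a structured call concrete, here is a small sketch in plain Dart. The JSON layout in exampleCallJson and the executeCall helper are assumptions for illustration only: the SDK used in this codelab hands you typed objects rather than raw JSON, so treat this as a picture of the concept, not the real wire format.

```dart
import 'dart:convert';

/// Hypothetical JSON shape for a function call (illustration only).
const exampleCallJson =
    '{"name": "set_color", "args": {"red": 0.13, "green": 0.55, "blue": 0.13}}';

/// Decodes a call, runs the matching app function, and returns a result
/// map that could be sent back to the model.
Map<String, Object?> executeCall(String callJson) {
  final call = jsonDecode(callJson) as Map<String, dynamic>;
  final name = call['name'] as String;
  final args =
      (call['args'] as Map<String, dynamic>?) ?? const <String, dynamic>{};
  switch (name) {
    case 'set_color':
      // A real app would update its state here; the sketch echoes the args.
      return {'success': true, 'applied': args};
    default:
      return {'success': false, 'reason': 'Unknown function $name'};
  }
}
```

Because the parameters arrive as named, typed values, the app never has to search free-form text for numbers.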

Why function calling matters for Flutter apps

Function calling creates a powerful bridge between natural language and application features:

- Direct action: Users can describe what they want in natural language, and the app responds with concrete actions

- Structured output: The LLM produces clean, structured data rather than text that needs parsing

- Complex operations: Enables the LLM to access external data, perform calculations, or modify application state

- Better user experience: Creates seamless integration between conversation and functionality

In your Colorist app, function calling allows users to say "I want a forest green" and have the UI immediately update with that color, without having to parse RGB values from text.

Define function declarations

Create a new file lib/services/gemini_tools.dart to define your function declarations:

lib/services/gemini_tools.dart

import 'package:firebase_vertexai/firebase_vertexai.dart';
import 'package:flutter_riverpod/flutter_riverpod.dart';
import 'package:riverpod_annotation/riverpod_annotation.dart';

part 'gemini_tools.g.dart';

class GeminiTools {
  GeminiTools(this.ref);

  final Ref ref;

  FunctionDeclaration get setColorFuncDecl => FunctionDeclaration(
    'set_color',
    'Set the color of the display square based on red, green, and blue values.',
    parameters: {
      'red': Schema.number(description: 'Red component value (0.0 - 1.0)'),
      'green': Schema.number(description: 'Green component value (0.0 - 1.0)'),
      'blue': Schema.number(description: 'Blue component value (0.0 - 1.0)'),
    },
  );

  List<Tool> get tools => [
    Tool.functionDeclarations([setColorFuncDecl]),
  ];
}

@riverpod
GeminiTools geminiTools(Ref ref) => GeminiTools(ref);

Understanding function declarations

Let's break down what this code does:

- Function naming: You name your function set_color to clearly indicate its purpose

- Function description: You provide a clear description that helps the LLM understand when to use it

- Parameter definitions: You define structured parameters with their own descriptions:

  - red: The red component of RGB, specified as a number between 0.0 and 1.0

  - green: The green component of RGB, specified as a number between 0.0 and 1.0

  - blue: The blue component of RGB, specified as a number between 0.0 and 1.0

- Schema types: You use Schema.number() to indicate these are numeric values

- Tools collection: You create a list of tools containing your function declaration

This structured approach helps the Gemini LLM understand:

- When it should call this function

- What parameters it needs to provide

- What constraints apply to those parameters (like the value range)
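In the declaration as written, the value range appears only in the description text, so it works as guidance to the model rather than as a check in your app. A defensive handler might therefore validate what it receives. The following is a minimal sketch in plain Dart; readComponent is a hypothetical helper, not part of the codelab.

```dart
/// Reads one color component from [args], accepting ints or doubles,
/// and clamps it into the range 0.0 to 1.0.
/// Throws an [ArgumentError] when the value is missing or not a number.
double readComponent(Map<String, Object?> args, String key) {
  final value = args[key];
  if (value is! num || value.isNaN) {
    throw ArgumentError.value(value, key, 'expected a number');
  }
  // Out-of-range values are pulled back to the nearest bound.
  return value.clamp(0.0, 1.0).toDouble();
}
```

Clamping is one possible design choice. Rejecting out-of-range values and reporting the failure back to the LLM is an equally reasonable alternative, because it gives the model a chance to correct itself.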

Update the Gemini model provider

Now, modify your lib/providers/gemini.dart file to include the function declarations when initializing the Gemini model:

lib/providers/gemini.dart

import 'dart:async';

import 'package:firebase_core/firebase_core.dart';
import 'package:firebase_vertexai/firebase_vertexai.dart';
import 'package:flutter_riverpod/flutter_riverpod.dart';
import 'package:riverpod_annotation/riverpod_annotation.dart';

import '../firebase_options.dart';
import '../services/gemini_tools.dart'; // Add this import
import 'system_prompt.dart';

part 'gemini.g.dart';

@riverpod
Future<FirebaseApp> firebaseApp(Ref ref) =>
    Firebase.initializeApp(options: DefaultFirebaseOptions.currentPlatform);

@riverpod
Future<GenerativeModel> geminiModel(Ref ref) async {
  await ref.watch(firebaseAppProvider.future);
  final systemPrompt = await ref.watch(systemPromptProvider.future);
  final geminiTools = ref.watch(geminiToolsProvider); // Add this line

  final model = FirebaseVertexAI.instance.generativeModel(
    model: 'gemini-2.0-flash',
    systemInstruction: Content.system(systemPrompt),
    tools: geminiTools.tools, // And this line
  );
  return model;
}

@Riverpod(keepAlive: true)
Future<ChatSession> chatSession(Ref ref) async {
  final model = await ref.watch(geminiModelProvider.future);
  return model.startChat();
}

The key change is adding the tools: geminiTools.tools parameter when creating the generative model. This makes Gemini aware of the functions that are available for it to call.

Update the system prompt

Now you need to modify your system prompt to instruct the LLM about using the new set_color tool. Update assets/system_prompt.md:

assets/system_prompt.md

# Colorist System Prompt

You are a color expert assistant integrated into a desktop app called Colorist. Your job is to interpret natural language color descriptions and set the appropriate color values using a specialized tool.

## Your Capabilities

You are knowledgeable about colors, color theory, and how to translate natural language descriptions into specific RGB values. You have access to the following tool:

`set_color` - Sets the RGB values for the color display based on a description

## How to Respond to User Inputs

When users describe a color:

1. First, acknowledge their color description with a brief, friendly response

2. Interpret what RGB values would best represent that color description

3. Use the `set_color` tool to set those values (all values should be between 0.0 and 1.0)

4. After setting the color, provide a brief explanation of your interpretation

Example:

User: "I want a sunset orange"

You: "Sunset orange is a warm, vibrant color that captures the golden-red hues of the setting sun. It combines a strong red component with moderate orange tones."

[Then you would call the set_color tool with approximately: red=1.0, green=0.5, blue=0.25]

After the tool call: "I've set a warm orange with strong red, moderate green, and minimal blue components that is reminiscent of the sun low on the horizon."

## When Descriptions are Unclear

If a color description is ambiguous or unclear, please ask the user clarifying questions, one at a time.

## Important Guidelines

- Always keep RGB values between 0.0 and 1.0

- Provide thoughtful, knowledgeable responses about colors

- When possible, include color psychology, associations, or interesting facts about colors

- Be conversational and engaging in your responses

- Focus on being helpful and accurate with your color interpretations

The key changes to the system prompt are:

- Tool introduction: Instead of asking for formatted RGB values, you now tell the LLM about the set_color tool

- Modified process: You change step 3 from "format values in the response" to "use the tool to set values"

- Updated example: You show how the response should include a tool call instead of formatted text

- Removed formatting requirement: Since you're using structured function calls, you no longer need a specific text format

This updated prompt directs the LLM to use function calling rather than just providing RGB values in text form.

Generate Riverpod code

Run the build runner command to generate the needed Riverpod code:

dart run build_runner build --delete-conflicting-outputs

Run the application

At this point, Gemini will generate content that attempts to use function calling, but you haven't yet implemented handlers for the function calls. When you run the app and describe a color, you'll see Gemini responding as if it has invoked a tool, but you won't see any color changes in the UI until the next step.

Run your app:

flutter run -d DEVICE

Try describing a color like "deep ocean blue" or "forest green" and observe the responses. The LLM is attempting to call the functions defined above, but your code isn't detecting function calls yet.

The function calling process

Let's understand what happens when Gemini uses function calling:

- Function selection: The LLM decides if a function call would be helpful based on the user's request

- Parameter generation: The LLM generates parameter values that fit the function's schema

- Function call format: The LLM sends a structured function call object in its response

- Application handling: Your app would receive this call and execute the relevant function (implemented in the next step)

- Response integration: In multi-turn conversations, the LLM expects the function's result to be returned

In the current state of your app, the first three steps occur, but steps 4 and 5 (handling the function calls and returning their results to the LLM) are not implemented yet. You'll add them in the next step.

Technical details: How Gemini decides when to use functions

Gemini makes intelligent decisions about when to use functions based on:

- User intent: Whether the user's request would be best served by a function

- Function relevance: How well the available functions match the task

- Parameter availability: Whether it can confidently determine parameter values

- System instructions: Guidance from your system prompt about function usage

By providing clear function declarations and system instructions, you've set up Gemini to recognize color description requests as opportunities to call the set_color function.

What's next?

In the next step, you'll implement handlers for the function calls coming from Gemini. This will complete the circle, allowing user descriptions to trigger actual color changes in the UI through the LLM's function calls.

Troubleshooting

Function declaration issues

If you encounter errors with function declarations:

- Check that the parameter names and types match what's expected

- Verify that the function name is clear and descriptive

- Ensure the function description accurately explains its purpose

System prompt problems

If the LLM isn't attempting to use the function:

- Verify that your system prompt clearly instructs the LLM to use the set_color tool

- Check that the example in the system prompt demonstrates function usage

- Try making the instruction to use the tool more explicit

General issues

If you encounter other problems:

- Check the console for any errors related to function declarations

- Verify that the tools are properly passed to the model

- Ensure all Riverpod generated code is up to date

Key concepts learned

- Defining function declarations to extend LLM capabilities in Flutter apps

- Creating parameter schemas for structured data collection

- Integrating function declarations with the Gemini model

- Updating system prompts to encourage function usage

- Understanding how LLMs select and call functions

This step demonstrates how LLMs can bridge the gap between natural language input and structured function calls, laying the groundwork for seamless integration between conversation and application features.

6. Implementing tool handling

In this step, you'll implement handlers for the function calls coming from Gemini. This completes the circle of communication between natural language inputs and concrete application features, allowing the LLM to directly manipulate your UI based on user descriptions.

What you'll learn in this step

- Understanding the complete function calling pipeline in LLM applications

- Processing function calls from Gemini in a Flutter application

- Implementing function handlers that modify application state

- Handling function responses and returning results to the LLM

- Creating a complete communication flow between LLM and UI

- Logging function calls and responses for transparency

Understanding the function calling pipeline

Before diving into implementation, let's understand the complete function calling pipeline:

The end-to-end flow

- User input: User describes a color in natural language (e.g., "forest green")

- LLM processing: Gemini analyzes the description and decides to call the set_color function

- Function call generation: Gemini creates a structured JSON with parameters (red, green, blue values)

- Function call reception: Your app receives this structured data from Gemini

- Function execution: Your app executes the function with the provided parameters

- State update: The function updates your app's state (changing the displayed color)

- Response generation: Your function returns results back to the LLM

- Response incorporation: The LLM incorporates these results into its final response

- UI update: Your UI reacts to the state change, displaying the new color

The complete communication cycle is essential for proper LLM integration. When an LLM makes a function call, it doesn't simply send the request and move on. Instead, it waits for your application to execute the function and return results. The LLM then uses these results to formulate its final response, creating a natural conversation flow that acknowledges the actions taken.

Implement function handlers

Let's update your lib/services/gemini_tools.dart file to add handlers for function calls:

lib/services/gemini_tools.dart

import 'package:colorist_ui/colorist_ui.dart';
import 'package:firebase_vertexai/firebase_vertexai.dart';
import 'package:flutter_riverpod/flutter_riverpod.dart';
import 'package:riverpod_annotation/riverpod_annotation.dart';

part 'gemini_tools.g.dart';

class GeminiTools {
  GeminiTools(this.ref);

  final Ref ref;

  FunctionDeclaration get setColorFuncDecl => FunctionDeclaration(
    'set_color',
    'Set the color of the display square based on red, green, and blue values.',
    parameters: {
      'red': Schema.number(description: 'Red component value (0.0 - 1.0)'),
      'green': Schema.number(description: 'Green component value (0.0 - 1.0)'),
      'blue': Schema.number(description: 'Blue component value (0.0 - 1.0)'),
    },
  );

  List<Tool> get tools => [
    Tool.functionDeclarations([setColorFuncDecl]),
  ];

  Map<String, Object?> handleFunctionCall( // Add from here
    String functionName,
    Map<String, Object?> arguments,
  ) {
    final logStateNotifier = ref.read(logStateNotifierProvider.notifier);
    logStateNotifier.logFunctionCall(functionName, arguments);
    return switch (functionName) {
      'set_color' => handleSetColor(arguments),
      _ => handleUnknownFunction(functionName),
    };
  }

  Map<String, Object?> handleSetColor(Map<String, Object?> arguments) {
    final colorStateNotifier = ref.read(colorStateNotifierProvider.notifier);
    final red = (arguments['red'] as num).toDouble();
    final green = (arguments['green'] as num).toDouble();
    final blue = (arguments['blue'] as num).toDouble();
    final functionResults = {
      'success': true,
      'current_color':
          colorStateNotifier
              .updateColor(red: red, green: green, blue: blue)
              .toLLMContextMap(),
    };

    final logStateNotifier = ref.read(logStateNotifierProvider.notifier);
    logStateNotifier.logFunctionResults(functionResults);
    return functionResults;
  }

  Map<String, Object?> handleUnknownFunction(String functionName) {
    final logStateNotifier = ref.read(logStateNotifierProvider.notifier);
    logStateNotifier.logWarning('Unsupported function call $functionName');
    return {
      'success': false,
      'reason': 'Unsupported function call $functionName',
    };
  } // To here.
}

@riverpod
GeminiTools geminiTools(Ref ref) => GeminiTools(ref);

Understanding the function handlers

Let's break down what these function handlers do:

- handleFunctionCall: A central dispatcher that:

  - Logs the function call for transparency in the log panel

  - Routes to the appropriate handler based on the function name

  - Returns a structured response that will be sent back to the LLM

- handleSetColor: The specific handler for your set_color function that:

  - Extracts RGB values from the arguments map

  - Converts them to the expected types (doubles)

  - Updates the application's color state using the colorStateNotifier

  - Creates a structured response with success status and current color information

  - Logs the function results for debugging

- handleUnknownFunction: A fallback handler for unknown functions that:

  - Logs a warning about the unsupported function

  - Returns an error response to the LLM

The handleSetColor function is particularly important as it bridges the gap between the LLM's natural language understanding and concrete UI changes.
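If your app grows beyond a single tool, one alternative to a switch-based dispatcher is a registry that maps function names to handlers. The sketch below is plain Dart and is not part of the codelab: ToolHandler and ToolRegistry are hypothetical names. It also shows one way to turn a handler failure into a result the LLM can read, instead of letting the exception escape.

```dart
/// Signature shared by every tool handler in this sketch.
typedef ToolHandler = Map<String, Object?> Function(Map<String, Object?> args);

/// Hypothetical registry that routes function calls by name.
class ToolRegistry {
  final Map<String, ToolHandler> _handlers = {};

  /// Associates [name] with [handler], replacing any earlier entry.
  void register(String name, ToolHandler handler) {
    _handlers[name] = handler;
  }

  /// Runs the handler for [name], or returns an error map when the
  /// function is unknown or the handler throws.
  Map<String, Object?> dispatch(String name, Map<String, Object?> args) {
    final handler = _handlers[name];
    if (handler == null) {
      return {'success': false, 'reason': 'Unsupported function call $name'};
    }
    try {
      return handler(args);
    } catch (error) {
      return {'success': false, 'reason': 'Handler failed: $error'};
    }
  }
}
```

Returning a failure map keeps the conversation going: the model sees that the call did not succeed and can apologize or try again, whereas a thrown exception ends the turn with a generic error message.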

Update the Gemini chat service to process function calls and responses

Now, let's update the lib/services/gemini_chat_service.dart file to process function calls from the LLM responses and send the results back to the LLM:

lib/services/gemini_chat_service.dart

import 'dart:async';

import 'package:colorist_ui/colorist_ui.dart';
import 'package:firebase_vertexai/firebase_vertexai.dart';
import 'package:flutter_riverpod/flutter_riverpod.dart';
import 'package:riverpod_annotation/riverpod_annotation.dart';

import '../providers/gemini.dart';
import 'gemini_tools.dart'; // Add this import

part 'gemini_chat_service.g.dart';

class GeminiChatService {
  GeminiChatService(this.ref);

  final Ref ref;

  Future<void> sendMessage(String message) async {
    final chatSession = await ref.read(chatSessionProvider.future);
    final chatStateNotifier = ref.read(chatStateNotifierProvider.notifier);
    final logStateNotifier = ref.read(logStateNotifierProvider.notifier);

    chatStateNotifier.addUserMessage(message);
    logStateNotifier.logUserText(message);
    final llmMessage = chatStateNotifier.createLlmMessage();
    try {
      final response = await chatSession.sendMessage(Content.text(message));

      final responseText = response.text;
      if (responseText != null) {
        logStateNotifier.logLlmText(responseText);
        chatStateNotifier.appendToMessage(llmMessage.id, responseText);
      }

      if (response.functionCalls.isNotEmpty) { // Add from here
        final geminiTools = ref.read(geminiToolsProvider);
        final functionResultResponse = await chatSession.sendMessage(
          Content.functionResponses([
            for (final functionCall in response.functionCalls)
              FunctionResponse(
                functionCall.name,
                geminiTools.handleFunctionCall(
                  functionCall.name,
                  functionCall.args,
                ),
              ),
          ]),
        );
        final responseText = functionResultResponse.text;
        if (responseText != null) {
          logStateNotifier.logLlmText(responseText);
          chatStateNotifier.appendToMessage(llmMessage.id, responseText);
        }
      } // To here.
    } catch (e, st) {
      logStateNotifier.logError(e, st: st);
      chatStateNotifier.appendToMessage(
        llmMessage.id,
        "\nI'm sorry, I encountered an error processing your request. "
        "Please try again.",
      );
    } finally {
      chatStateNotifier.finalizeMessage(llmMessage.id);
    }
  }
}

@riverpod
GeminiChatService geminiChatService(Ref ref) => GeminiChatService(ref);

Understanding the flow of communication

The key addition here is the complete handling of function calls and responses:

if (response.functionCalls.isNotEmpty) {
  final geminiTools = ref.read(geminiToolsProvider);
  final functionResultResponse = await chatSession.sendMessage(
    Content.functionResponses([
      for (final functionCall in response.functionCalls)
        FunctionResponse(
          functionCall.name,
          geminiTools.handleFunctionCall(
            functionCall.name,
            functionCall.args,
          ),
        ),
    ]),
  );
  final responseText = functionResultResponse.text;
  if (responseText != null) {
    logStateNotifier.logLlmText(responseText);
    chatStateNotifier.appendToMessage(llmMessage.id, responseText);
  }
}

This code:

- Checks if the LLM response contains any function calls

- For each function call, invokes your handleFunctionCall method with the function name and arguments

- Collects the results of each function call

- Sends these results back to the LLM using Content.functionResponses

- Processes the LLM's response to the function results

- Updates the UI with the final response text

This creates a round-trip flow:

- User → LLM: Requests a color

- LLM → App: Function calls with parameters

- App → User: New color displayed

- App → LLM: Function results

- LLM → User: Final response incorporating function results
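The code in this step handles a single round of function calls. In principle, the LLM's reply to the function results could itself request further calls, so a more general version would loop until a reply contains none. The sketch below shows that loop in plain Dart with stand-in types: Reply, SendResults, and resolveCalls are hypothetical and do not correspond to SDK classes.

```dart
/// Minimal stand-in for one model reply: optional text plus the names of
/// any functions the model wants called.
class Reply {
  const Reply({this.text, this.calls = const []});
  final String? text;
  final List<String> calls;
}

/// Sends function results to the model and returns its next reply.
typedef SendResults =
    Future<Reply> Function(List<Map<String, Object?>> results);

/// Collects the text of every reply, executing requested functions and
/// sending back their results until the model stops asking for calls.
/// Throws a [StateError] if calls are still pending after [maxRounds].
Future<List<String>> resolveCalls(
  Reply first,
  SendResults sendResults,
  Map<String, Object?> Function(String name) execute, {
  int maxRounds = 5,
}) async {
  final texts = <String>[];
  var reply = first;
  for (var round = 0; round < maxRounds; round++) {
    final text = reply.text;
    if (text != null) texts.add(text);
    if (reply.calls.isEmpty) return texts;
    final results = [for (final name in reply.calls) execute(name)];
    reply = await sendResults(results);
  }
  throw StateError('Function calls still pending after $maxRounds rounds');
}
```

The maxRounds limit is a safety net, chosen arbitrarily here, so that a model stuck in a loop of tool calls cannot keep the conversation busy forever.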

Generate Riverpod code

Run the build runner command to generate the needed Riverpod code:

dart run build_runner build --delete-conflicting-outputs

Run and test the complete flow

Now run your application:

flutter run -d DEVICE

Try entering various color descriptions:

- "I'd like a deep crimson red"

- "Show me a calming sky blue"

- "Give me the color of fresh mint leaves"

- "I want to see a warm sunset orange"

- "Make it a rich royal purple"

Now you should see:

- Your message appearing in the chat interface

- Gemini's response appearing in the chat

- Function calls being logged in the log panel

- Function results being logged immediately after

- The color rectangle updating to display the described color

- RGB values updating to show the new color's components

- Gemini's final response appearing, often commenting on the color that was set

The log panel provides insight into what's happening behind the scenes. You'll see:

- The exact function calls Gemini is making

- The parameters it's choosing for each RGB value

- The results your function is returning

- The follow-up responses from Gemini

The color state notifier

The colorStateNotifier you're using to update colors is part of the colorist_ui package. It manages:

- The current color displayed in the UI

- The color history (last 10 colors)

- Notification of state changes to UI components

When you call updateColor with new RGB values, it:

- Creates a new ColorData object with the provided values

- Updates the current color in the app state

- Adds the color to the history

- Triggers UI updates through Riverpod's state management

The UI components in the colorist_ui package watch this state and automatically update when it changes, creating a reactive experience.
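To picture what such a notifier does internally, here is a simplified sketch of a bounded color history in plain Dart. It is not the colorist_ui implementation: ColorSample and ColorHistory are hypothetical names, and the real package adds Riverpod notifications on top of this kind of logic.

```dart
/// Hypothetical value type holding one color's components (0.0 to 1.0).
class ColorSample {
  const ColorSample(this.red, this.green, this.blue);
  final double red;
  final double green;
  final double blue;
}

/// Keeps the most recent colors, newest first, up to [capacity] entries.
class ColorHistory {
  ColorHistory({this.capacity = 10});

  final int capacity;
  final List<ColorSample> _recent = [];

  /// The color currently shown, or null before the first update.
  ColorSample? get current => _recent.isEmpty ? null : _recent.first;

  /// Read-only view of the history, newest first.
  List<ColorSample> get recent => List.unmodifiable(_recent);

  /// Records a new color and drops the oldest one when over capacity.
  ColorSample update({
    required double red,
    required double green,
    required double blue,
  }) {
    final sample = ColorSample(red, green, blue);
    _recent.insert(0, sample);
    if (_recent.length > capacity) _recent.removeLast();
    return sample;
  }
}
```

Returning the new sample from update mirrors how handleSetColor uses the return value of updateColor to build the result it sends back to the LLM.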

Understanding error handling

Your implementation includes robust error handling:

- Try-catch block: Wraps all LLM interactions to catch any exceptions

- Error logging: Records errors in the log panel with stack traces

- User feedback: Provides a friendly error message in the chat

- State cleanup: Finalizes the message state even if an error occurs

This ensures the app remains stable and provides appropriate feedback even when issues occur with the LLM service or function execution.
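The ordering guarantees of try, catch, and finally are what make this work. The following plain Dart sketch records the sequence of events for one turn; runGuarded and the event strings are hypothetical and only illustrate the control flow.

```dart
/// Runs one model call and records what happened, in order.
/// The 'finalized' event is always last, whether or not the call failed.
Future<List<String>> runGuarded(Future<String> Function() callModel) async {
  final events = <String>[];
  try {
    final reply = await callModel();
    events.add('reply: $reply');
  } catch (error) {
    // Corresponds to logging the error and showing the apology message.
    events.add('error: $error');
    events.add('apology shown');
  } finally {
    // Corresponds to finalizing the message state.
    events.add('finalized');
  }
  return events;
}
```

Because the cleanup lives in finally, a failure anywhere in the try block, including inside a function handler, still leaves the chat message in a finished state.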

The power of function calling for user experience

What you've accomplished here demonstrates how LLMs can create powerful natural interfaces:

- Natural language interface: Users express intent in everyday language

- Intelligent interpretation: The LLM translates vague descriptions into precise values

- Direct manipulation: The UI updates in response to natural language

- Contextual responses: The LLM provides conversational context about the changes

- Low cognitive load: Users don't need to understand RGB values or color theory

This pattern of using LLM function calling to bridge natural language and UI actions can be extended to countless other domains beyond color selection.

What's next?

In the next step, you'll enhance the user experience by implementing streaming responses. Rather than waiting for the complete response, you'll process text chunks and function calls as they are received, creating a more responsive and engaging application.

Troubleshooting

Function call issues

If Gemini isn't calling your functions or parameters are incorrect:

- Verify your function declaration matches what's described in the system prompt

- Check that parameter names and types are consistent

- Ensure your system prompt explicitly instructs the LLM to use the tool

- Verify the function name in your handler matches exactly what's in the declaration

- Examine the log panel for detailed information on function calls

Function response issues

If function results aren't being properly sent back to the LLM:

- Check that your function returns a properly formatted Map

- Verify that the Content.functionResponses call is constructed correctly

- Look for any errors in the log related to function responses

- Ensure you're using the same chat session for the response

Color display issues

If colors aren't displaying correctly:

- Ensure RGB values are properly converted to doubles (the LLM might send them as integers)

- Verify that values are in the expected range (0.0 to 1.0)

- Check that the color state notifier is being called correctly

- Examine the log for the exact values being passed to the function
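When comparing logged values with what you see on screen, it can help to convert the 0.0 to 1.0 components into a familiar hex string. This is a small debugging sketch in plain Dart; toHexColor is a hypothetical helper and not part of the codelab or the colorist_ui package.

```dart
/// Converts components in the range 0.0 to 1.0 into an uppercase hex
/// string such as #FF8040. Out-of-range values are clamped first.
String toHexColor(double red, double green, double blue) {
  String channel(double value) {
    // Scale to 0..255, round to the nearest integer, print as two hex digits.
    final scaled = (value.clamp(0.0, 1.0) * 255).round();
    return scaled.toRadixString(16).padLeft(2, '0');
  }

  return '#${channel(red)}${channel(green)}${channel(blue)}'.toUpperCase();
}
```

For example, the sunset orange from the system prompt (red=1.0, green=0.5, blue=0.25) becomes #FF8040, which you can paste into any color picker to check the result.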

General problems

For general issues:

- Examine the logs for errors or warnings

- Verify connectivity to Vertex AI in Firebase

- Check for any type mismatches in function parameters

- Ensure all Riverpod generated code is up to date

Key concepts learned

- Implementing a complete function calling pipeline in Flutter

- Creating full communication between an LLM and your application

- Processing structured data from LLM responses

- Sending function results back to the LLM for incorporation into responses

- Using the log panel to gain visibility into LLM-application interactions

- Connecting natural language inputs to concrete UI changes

With this step complete, your app now demonstrates one of the most powerful patterns for LLM integration: translating natural language inputs into concrete UI actions, while maintaining a coherent conversation that acknowledges these actions. This creates an intuitive, conversational interface that feels magical to users.

7. Streaming responses for better UX

In this step, you'll enhance the user experience by implementing streaming responses from Gemini. Instead of waiting for the entire response to be generated, you'll process text chunks and function calls as they are received, creating a more responsive and engaging application.

What you'll cover in this step

- The importance of streaming for LLM-powered applications

- Implementing streaming LLM responses in a Flutter application

- Processing partial text chunks as they arrive from the API

- Managing conversation state to prevent message conflicts

- Handling function calls in streaming responses

- Creating visual indicators for in-progress responses

Why streaming matters for LLM applications

Before implementing, let's understand why streaming responses are crucial for creating excellent user experiences with LLMs:

Improved user experience

Streaming responses provide several significant user experience benefits:

- Reduced perceived latency: Users see text start appearing immediately (typically within 100-300ms), rather than waiting several seconds for a complete response. This perception of immediacy dramatically improves user satisfaction.

- Natural conversational rhythm: The gradual appearance of text mimics how humans communicate, creating a more natural dialogue experience.

- Progressive information processing: Users can begin processing information as it arrives, rather than being overwhelmed by a large block of text all at once.

- Opportunity for early interruption: In a full application, users could potentially interrupt or redirect the LLM if they see it going in an unhelpful direction.

- Visual confirmation of activity: The streaming text provides immediate feedback that the system is working, reducing uncertainty.

Technical advantages

Beyond UX improvements, streaming offers technical benefits:

- Early function execution: Function calls can be detected and executed as soon as they appear in the stream, without waiting for the complete response.

- Incremental UI updates: You can update your UI progressively as new information arrives, creating a more dynamic experience.

- Conversation state management: Streaming provides clear signals about when responses are complete vs. still in progress, enabling better state management.

- Reduced timeout risks: With non-streaming responses, long-running generations risk connection timeouts. Streaming establishes the connection early and maintains it.

For your Colorist app, implementing streaming means users will see both text responses and color changes appearing more promptly, creating a significantly more responsive experience.
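The difference between waiting for a Future and consuming a Stream can be seen in a few lines of plain Dart. In this sketch, fakeReplyStream stands in for a streaming model call and renderStream for the UI update; both are hypothetical and use no Firebase APIs.

```dart
/// Hypothetical stand-in for a streaming model call: emits text chunks
/// with a short pause between them.
Stream<String> fakeReplyStream(List<String> chunks) async* {
  for (final chunk in chunks) {
    await Future<void>.delayed(const Duration(milliseconds: 10));
    yield chunk;
  }
}

/// Appends each chunk as it arrives and reports the text so far through
/// [onUpdate]. Returns the complete text once the stream ends.
Future<String> renderStream(
  Stream<String> chunks,
  void Function(String textSoFar) onUpdate,
) async {
  final buffer = StringBuffer();
  await for (final chunk in chunks) {
    buffer.write(chunk);
    onUpdate(buffer.toString());
  }
  return buffer.toString();
}
```

With a Future, onUpdate could only run once, after the last chunk. With a Stream, it runs after every chunk, which is what lets the chat bubble grow while the model is still generating.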

Add conversation state management

First, let's add a state provider to track whether the app is currently handling a streaming response. Update your lib/services/gemini_chat_service.dart file:

lib/services/gemini_chat_service.dart

import 'dart:async';

import 'package:colorist_ui/colorist_ui.dart';
import 'package:firebase_vertexai/firebase_vertexai.dart';
import 'package:flutter_riverpod/flutter_riverpod.dart';
import 'package:riverpod_annotation/riverpod_annotation.dart';

import '../providers/gemini.dart';
import 'gemini_tools.dart';

part 'gemini_chat_service.g.dart';

final conversationStateProvider = StateProvider( // Add from here...
  (ref) => ConversationState.idle,
); // To here.

class GeminiChatService {
  GeminiChatService(this.ref);

  final Ref ref;

  Future<void> sendMessage(String message) async {
    final chatSession = await ref.read(chatSessionProvider.future);
    final conversationState = ref.read(conversationStateProvider); // Add this line
    final chatStateNotifier = ref.read(chatStateNotifierProvider.notifier);
    final logStateNotifier = ref.read(logStateNotifierProvider.notifier);

    if (conversationState == ConversationState.busy) { // Add from here...
      logStateNotifier.logWarning(
        "Can't send a message while a conversation is in progress",
      );
      throw Exception(
        "Can't send a message while a conversation is in progress",
      );
    }
    final conversationStateNotifier = ref.read(
      conversationStateProvider.notifier,
    );
    conversationStateNotifier.state = ConversationState.busy; // To here.

    chatStateNotifier.addUserMessage(message);
    logStateNotifier.logUserText(message);
    final llmMessage = chatStateNotifier.createLlmMessage();
    try { // Modify from here...
      final responseStream = chatSession.sendMessageStream(
        Content.text(message),
      );
      await for (final block in responseStream) {
        await _processBlock(block, llmMessage.id);
      } // To here.
    } catch (e, st) {
      logStateNotifier.logError(e, st: st);
      chatStateNotifier.appendToMessage(
        llmMessage.id,
        "\nI'm sorry, I encountered an error processing your request. "
        "Please try again.",
      );
    } finally {
      chatStateNotifier.finalizeMessage(llmMessage.id);
      conversationStateNotifier.state = ConversationState.idle; // Add this line.
    }
  }

  Future<void> _processBlock( // Add from here...
    GenerateContentResponse block,
    String llmMessageId,
  ) async {
    final chatSession = await ref.read(chatSessionProvider.future);
    final chatStateNotifier = ref.read(chatStateNotifierProvider.notifier);
    final logStateNotifier = ref.read(logStateNotifierProvider.notifier);
    final blockText = block.text;

    if (blockText != null) {
      logStateNotifier.logLlmText(blockText);
      chatStateNotifier.appendToMessage(llmMessageId, blockText);
    }

    if (block.functionCalls.isNotEmpty) {
      final geminiTools = ref.read(geminiToolsProvider);
      final responseStream = chatSession.sendMessageStream(
        Content.functionResponses([
          for (final functionCall in block.functionCalls)
            FunctionResponse(
              functionCall.name,
              geminiTools.handleFunctionCall(
                functionCall.name,
                functionCall.args,
              ),
            ),
        ]),
      );
      await for (final response in responseStream) {
        final responseText = response.text;
        if (responseText != null) {
          logStateNotifier.logLlmText(responseText);
          chatStateNotifier.appendToMessage(llmMessageId, responseText);
        }
      }
    }
  } // To here.
}

@riverpod
GeminiChatService geminiChatService(Ref ref) => GeminiChatService(ref);

Understanding the streaming implementation

Let's break down what this code does:

- Conversation state tracking:

  - A conversationStateProvider tracks whether the app is currently processing a response

  - The state transitions from idle → busy while processing, then back to idle

  - This prevents multiple concurrent requests that could conflict

- Stream initialization:

  - sendMessageStream() returns a Stream of response chunks instead of a Future with the complete response

  - Each chunk may contain text, function calls, or both

- Progressive processing:

  - await for processes each chunk as it arrives in real-time

  - Text is appended to the UI immediately, creating the streaming effect

  - Function calls are executed as soon as they're detected

- Function call handling:

  - When a function call is detected in a chunk, it's executed immediately

  - Results are sent back to the LLM through another streaming call

  - The LLM's response to these results is also processed in a streaming fashion

- Error handling and cleanup:

  - try/catch logs any error and appends an apology to the in-progress message

  - The finally block ensures conversation state is reset to idle

  - The message is always finalized, even if errors occur

This implementation creates a responsive, reliable streaming experience while maintaining proper conversation state.
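The busy/idle guard and the try/finally cleanup described above can be tried in isolation, without Firebase or Riverpod. The following is a minimal sketch in plain Dart: FakeChatService and fakeResponseStream are hypothetical stand-ins for the real service and for sendMessageStream(), not part of the codelab's code.

```dart
import 'dart:async';

enum ConversationState { idle, busy }

/// Hypothetical stand-in for the LLM: emits a few text chunks with a delay.
Stream<String> fakeResponseStream() async* {
  for (final chunk in ['Deep ', 'ocean ', 'blue']) {
    await Future<void>.delayed(const Duration(milliseconds: 10));
    yield chunk;
  }
}

/// Hypothetical, simplified service that mirrors the guard and cleanup logic.
class FakeChatService {
  ConversationState state = ConversationState.idle;
  final StringBuffer message = StringBuffer();

  Future<void> sendMessage() async {
    // Reject overlapping requests, as the real service does.
    if (state == ConversationState.busy) {
      throw StateError(
        "Can't send a message while a conversation is in progress",
      );
    }
    state = ConversationState.busy;
    try {
      // Append each chunk as soon as it arrives.
      await for (final chunk in fakeResponseStream()) {
        message.write(chunk);
      }
    } finally {
      // Always return to idle, whether the stream succeeded or failed.
      state = ConversationState.idle;
    }
  }
}

Future<void> main() async {
  final service = FakeChatService();
  final first = service.sendMessage(); // State becomes busy right away.

  Object? rejection;
  try {
    await service.sendMessage(); // Second call arrives while busy.
  } catch (e) {
    rejection = e;
  }
  await first;

  print('second call rejected: ${rejection is StateError}');
  print('message: ${service.message}');
  print('final state: ${service.state}');
}
```

Because a Dart async function runs synchronously up to its first await, the state is already busy by the time the second call checks it, so the second call is rejected while the first one completes normally.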

Update the main screen to connect conversation state

Modify your lib/main.dart file to pass the conversation state to the main screen:

lib/main.dart

import 'package:colorist_ui/colorist_ui.dart';

import 'package:flutter/material.dart';

import 'package:flutter_riverpod/flutter_riverpod.dart';

import 'providers/gemini.dart';

import 'services/gemini_chat_service.dart';

void main() async {

runApp(ProviderScope(child: MainApp()));

}

class MainApp extends ConsumerWidget {

const MainApp({super.key});

@override

Widget build(BuildContext context, WidgetRef ref) {

final model = ref.watch(geminiModelProvider);

final conversationState = ref.watch(conversationStateProvider); // Add this line

return MaterialApp(

theme: ThemeData(

colorScheme: ColorScheme.fromSeed(seedColor: Colors.deepPurple),

),

home: model.when(

data:

(data) => MainScreen(

conversationState: conversationState, // And this line

sendMessage: (text) {

ref.read(geminiChatServiceProvider).sendMessage(text);

},

),

loading: () => LoadingScreen(message: 'Initializing Gemini Model'),

error: (err, st) => ErrorScreen(error: err),

),

);

}

}

The key change here is passing the conversationState to the MainScreen widget. The MainScreen (provided by the colorist_ui package) will use this state to disable the text input while a response is being processed.

This creates a cohesive user experience where the UI reflects the current state of the conversation.

Generate Riverpod code

Run the build runner command to generate the needed Riverpod code:

dart run build_runner build --delete-conflicting-outputs

Run and test streaming responses

Run your application:

flutter run -d DEVICE

Now test the streaming behavior with various color descriptions, such as:

- "Show me the deep teal color of the ocean at twilight"

- "I'd like to see a vibrant coral that reminds me of tropical flowers"

- "Create a muted olive green like old army fatigues"

The streaming technical flow in detail

Let's examine exactly what happens when streaming a response:

Connection establishment

When you call sendMessageStream(), the following happens:

- The app establishes a connection to the Vertex AI service

- The user request is sent to the service

- The server begins processing the request

- The stream connection remains open, ready to transmit chunks

Chunk transmission

As Gemini generates content, chunks are sent through the stream:

- The server sends text chunks as they're generated (typically a few words or sentences)

- When Gemini decides to make a function call, it sends the function call information

- Additional text chunks may follow function calls

- The stream continues until the generation is complete

Progressive processing

Your app processes each chunk incrementally:

- Each text chunk is appended to the existing response

- Function calls are executed as soon as they're detected

- The UI updates in real-time with both text and function results

- State is tracked to show the response is still streaming

Stream completion

When the generation is complete:

- The stream is closed by the server

- Your await for loop exits naturally

- The message is marked as complete

- The conversation state is set back to idle

- The UI updates to reflect the completed state
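The four phases above (connection, chunk transmission, progressive processing, completion) can be sketched with an ordinary Dart Stream. In this sketch, Chunk, fakeGeneration, and consume are hypothetical names made up for illustration; the real app works with GenerateContentResponse objects instead.

```dart
import 'dart:async';

/// Hypothetical chunk type: a chunk may carry text, a function call, or both.
class Chunk {
  const Chunk({this.text, this.functionCall});
  final String? text;
  final String? functionCall;
}

/// Hypothetical stand-in for a generation that mixes text and a tool call.
Stream<Chunk> fakeGeneration() async* {
  yield const Chunk(text: 'Setting the color ');
  yield const Chunk(functionCall: 'set_color');
  yield const Chunk(text: 'to a deep teal.');
}

/// Processes chunks in arrival order and records what happened.
Future<List<String>> consume(Stream<Chunk> chunks) async {
  final events = <String>[];
  final buffer = StringBuffer();
  await for (final chunk in chunks) {
    final text = chunk.text;
    if (text != null) {
      buffer.write(text); // Append to the response shown so far.
      events.add('text so far: $buffer');
    }
    final call = chunk.functionCall;
    if (call != null) {
      events.add('function call: $call'); // Handled mid-stream.
    }
  }
  // The loop exits once the stream closes.
  events.add('stream complete');
  return events;
}

Future<void> main() async {
  final events = await consume(fakeGeneration());
  events.forEach(print);
}
```

The recorded events show the function call landing between two pieces of text, which is the same interleaving you can observe in the app's log panel.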

Streaming vs. non-streaming comparison

To better understand the benefits of streaming, let's compare streaming vs. non-streaming approaches:

| Aspect | Non-Streaming | Streaming |
| --- | --- | --- |
| Perceived latency | User sees nothing until the complete response is ready | User sees the first words as soon as the first chunk arrives |
| User experience | Long wait followed by sudden text appearance | Natural, progressive text appearance |
| State management | Simpler (messages are either pending or complete) | More complex (messages can be in a streaming state) |
| Function execution | Occurs only after complete response | Occurs during response generation |
| Implementation complexity | Simpler to implement | Requires additional state management |
| Error recovery | All-or-nothing response | Partial responses may still be useful |
| Code complexity | Less complex | More complex due to stream handling |

For an application like Colorist, the UX benefits of streaming outweigh the implementation complexity, especially for color interpretations that might take several seconds to generate.
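To get a feel for the perceived latency difference, you can time a simulated generation both ways. This is a rough sketch in plain Dart: generate is a hypothetical stand-in for the model, and the 30 ms delay per chunk is an arbitrary assumption, so the numbers only illustrate the shape of the difference.

```dart
import 'dart:async';

/// Hypothetical generator that produces one chunk every 30 ms.
Stream<String> generate() async* {
  for (final word in ['muted ', 'olive ', 'green']) {
    await Future<void>.delayed(const Duration(milliseconds: 30));
    yield word;
  }
}

Future<void> main() async {
  final watch = Stopwatch()..start();

  // Non-streaming: wait for every chunk before showing anything.
  final full = await generate().join();
  final nonStreamingMs = watch.elapsedMilliseconds;

  // Streaming: the first chunk is available as soon as it is produced.
  watch.reset();
  final firstChunk = await generate().first;
  final firstChunkMs = watch.elapsedMilliseconds;

  print('non-streaming: "$full" after about $nonStreamingMs ms');
  print('streaming: first chunk "$firstChunk" after about $firstChunkMs ms');
}
```

The total generation time is the same in both cases. What changes is how long the user waits before something appears on screen.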

Best practices for streaming UX

When implementing streaming in your own LLM applications, consider these best practices:

- Clear visual indicators: Always provide clear visual cues that distinguish streaming vs. complete messages

- Input blocking: Disable user input during streaming to prevent multiple overlapping requests

- Error recovery: Design your UI to handle graceful recovery if streaming is interrupted

- State transitions: Ensure smooth transitions between idle, streaming, and complete states

- Progress visualization: Consider subtle animations or indicators that show active processing

- Cancellation options: In a complete app, provide ways for users to cancel in-progress generations

- Function result integration: Design your UI to handle function results appearing mid-stream

- Performance optimization: Minimize UI rebuilds during rapid stream updates

The colorist_ui package implements many of these best practices for you, but they're important considerations for any streaming LLM implementation.
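As an illustration of the cancellation practice, here is a minimal sketch that uses StreamSubscription.cancel() from dart:async. The slowChunks stream is a hypothetical stand-in for an LLM response. The sketch only shows that the app stops receiving chunks; whether cancelling a subscription on a real sendMessageStream() response also stops generation on the server is an assumption you would need to verify.

```dart
import 'dart:async';

/// Hypothetical slow response: one chunk every 20 ms.
Stream<String> slowChunks() async* {
  for (var i = 0; i < 100; i++) {
    await Future<void>.delayed(const Duration(milliseconds: 20));
    yield 'chunk $i ';
  }
}

Future<void> main() async {
  final received = <String>[];

  // listen() returns a subscription that can be cancelled later,
  // which a plain await for loop does not expose.
  final subscription = slowChunks().listen(received.add);

  // Simulate the user pressing a "Stop" button after a short while.
  await Future<void>.delayed(const Duration(milliseconds: 70));
  await subscription.cancel();

  final countAtCancel = received.length;
  await Future<void>.delayed(const Duration(milliseconds: 60));

  // No further chunks are delivered once the subscription is cancelled.
  print(received.length == countAtCancel); // prints: true
}
```

In a real app you would also finalize the partial message and reset the conversation state to idle after cancelling, just as the finally block does for completed streams.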

What's next?

In the next step, you'll implement LLM synchronization by notifying Gemini when users select colors from history. This will create a more cohesive experience where the LLM is aware of user-initiated changes to the application state.

Troubleshooting

Stream processing issues

If you encounter issues with stream processing:

- Symptoms: Partial responses, missing text, or abrupt stream termination

- Solution: Check network connectivity and ensure proper async/await patterns in your code

- Diagnosis: Examine the log panel for error messages or warnings related to stream processing

- Fix: Ensure all stream processing uses proper error handling with try/catch blocks
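To see why wrapping the await for loop in try/catch matters, consider this minimal sketch of a stream that fails partway through. flakyStream and collect are hypothetical names for illustration, and the '[interrupted]' marker stands in for the apology text that the real service appends.

```dart
import 'dart:async';

/// Hypothetical stream that drops the connection after two chunks.
Stream<String> flakyStream() async* {
  yield 'A vibrant ';
  yield 'coral ';
  throw StateError('connection dropped');
}

/// Collects chunks and keeps whatever arrived before the error.
Future<String> collect(Stream<String> chunks) async {
  final buffer = StringBuffer();
  try {
    await for (final chunk in chunks) {
      buffer.write(chunk);
    }
  } catch (e) {
    // The error surfaces inside the await for loop, so it is caught here
    // and the partial text is kept instead of being lost.
    buffer.write('[interrupted]');
  }
  return buffer.toString();
}

Future<void> main() async {
  print(await collect(flakyStream())); // prints: A vibrant coral [interrupted]
}
```

Without the try/catch, the same error would propagate out of collect and the text received so far would be discarded along with it.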

Missing function calls

If function calls aren't being detected in the stream:

- Symptoms: Text appears but colors don't update, or log shows no function calls

- Solution: Verify the system prompt's instructions about using function calls

- Diagnosis: Check the log panel to see if function calls are being received

- Fix: Adjust your system prompt to more explicitly instruct the LLM to use the set_color tool

General error handling

For any other issues:

- Step 1: Check the log panel for error messages

- Step 2: Verify Vertex AI in Firebase connectivity

- Step 3: Ensure all Riverpod generated code is up to date

- Step 4: Review the streaming implementation for any missing await statements

Key concepts learned

- Implementing streaming responses with the Gemini API for more responsive UX

- Managing conversation state to handle streaming interactions properly

- Processing real-time text and function calls as they arrive

- Creating responsive UIs that update incrementally during streaming

- Handling concurrent streams with proper async patterns